US unable to rationally view China’s technological strides

When it comes to China's latest advancement in chips, Bloomberg reported on Tuesday that "it won't be surprising ... The US can always tighten its sanctions regimes and strengthen the safeguards to slow the proliferation. But commerce will almost always force out technological secrets." This reflects the US' habitual reluctance to face up to China's technological advancement. The US believes that China's capabilities are not yet up to par and that China can develop only by relying on others' intellectual property or technical secrets.

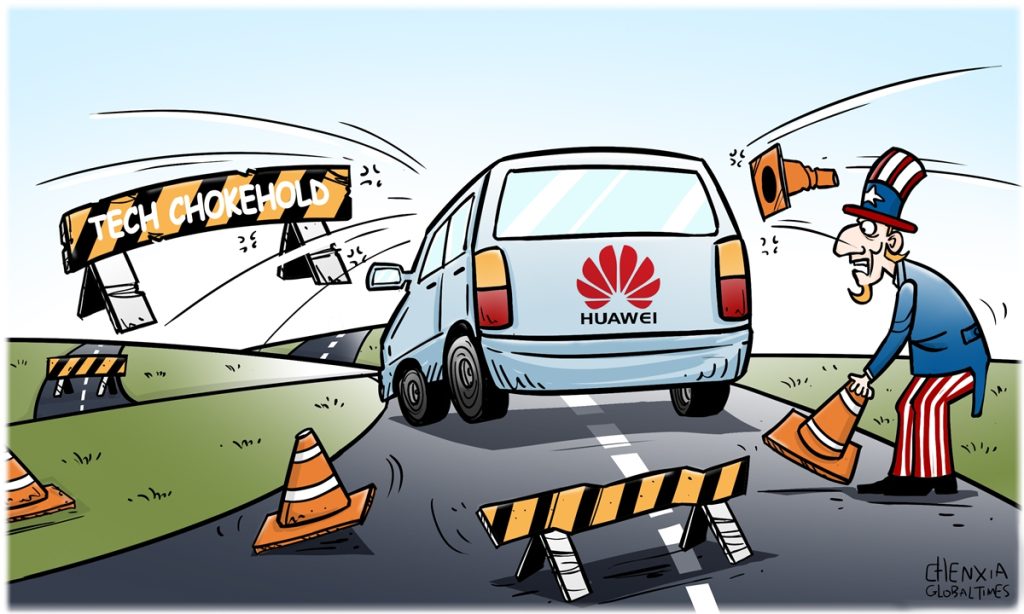

Essentially, such a view regards the world's technological progress from a racist perspective, as if any technological progress by other nations, however slight, must come from theft or leaked US secrets, because other nations supposedly cannot innovate on their own. In fact, China's investment in research and development, exemplified by Huawei, has been world-leading for years.

In the same article, Bloomberg also cites historical examples to argue that "no one has a monopoly on innovation." China once led the world in silk, papermaking and porcelain techniques, which were eventually introduced to the West. By this logic, Huawei's semiconductor breakthrough is merely part of "a long history of the spread — or theft — of what we now call intellectual property." Does the US media think that framing the issue in historical terms will make readers more willing to accept the so-called "theft of intellectual property"?

Globalization has spread knowledge and some technologies around the world. However, whoever masters a technology wants to control it, and cases of voluntary technology sharing are rare. Moreover, modern societies have comprehensive patent and intellectual property laws to protect inventors' interests.

In this regard, Lü Xiang, a research fellow at the Chinese Academy of Social Sciences, told the Global Times, "If a country wants to achieve development through the natural spread of technology, it is either very difficult, or it is meaningless, because it has to wait until the technology is already outdated."

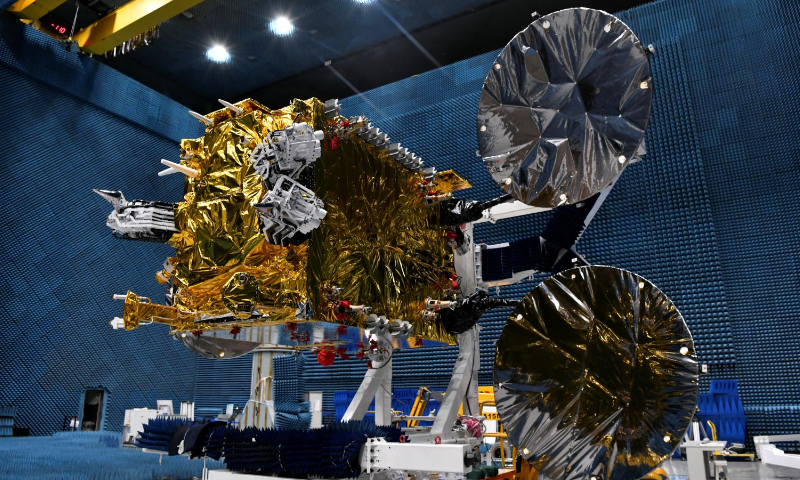

Although the US has imposed various technological blockades on China, China has continued to make breakthroughs through its own efforts.

By contrast, the US, the world's largest surveillance and espionage state, keeps stressing the protection of intellectual property rights while using hegemonic means to suppress advanced companies in other countries.

It is inherently contradictory for the American media to criticize China's independent innovation as "misguided attempts" and "belligerence" while at the same time advocating a "technical blockade." Of course, the US wants to maintain its hegemony in every domain, including technology, but no country can stop companies in another country from developing new technologies, and no company in the world can become world-leading through theft.

Over the years, China's technological advancement has been astonishing, and China has even surpassed Western countries in many fields. This has aroused suspicion in these countries, which accuse China of secretly stealing their technology and try to discredit it. These countries are simply envious of China, and they also underestimate it.

Lü believes that China and Chinese companies, including Huawei, have developed some technologies that are more advanced than those of American companies. The US has an edge neither in chip manufacturing nor in craftsmanship. We will prove that the high walls the US has built are ultimately ineffective, because China's technological progress relies on leading investment in manpower and materials, not on information leaked from the US. How can China steal a technology that the US does not have at all?

The author also wrote that "If China and the US continue to use trade and technology in a zero-sum game of world domination, we are all likely to end up on the zero end of the equation." In fact, what the US is doing is not merely a zero-sum game but a negative-sum game: a zero-sum game harms others to benefit oneself, while a negative-sum game harms others without benefiting oneself.

Some technology patents are in fact mutually beneficial. For instance, electronic products manufactured in many countries incorporate Huawei's patents and technologies, while some Huawei components may in turn use Western technologies and products. Such exchange is a driving force of technological progress worldwide.

But if the US persists in its bandit logic, it will get nowhere. Ultimately, all countries are interconnected in the era of globalization, which means this kind of robber mentality will no longer work. Jointly advancing science and technology through cooperation is a trend the US cannot stop.

"As to what choice the US government will make, we still have to wait. We can't expect the US decision-makers to always be smart, especially for the current administration," Lü added.