Hackneyed cliches

The developer of an unmanned suspension railway has finished its phase I construction and started testing on Monday in Shanghai, the latest step in intelligent monorail testing in China.

The Baoshan demonstration line project finished its 400-meter-long phase I construction and started testing, aiming to offer passengers a new, technology-rich travel experience.

Designed by EPN Skytrain Development Co, the demonstration line project has a designed length of 940 meters, two stations and one repair facility, and a maximum speed of 60 kilometers per hour.

In line with the development trend of intelligent and unmanned urban rail transit in Shanghai, the system is equipped with a Grade of Automation 4 autonomous train operation system, the highest level in the industry.

Putting unmanned intelligent technology on a suspension railway is an innovative move in the industry, and it shows the developer's high-level development capability for intelligent systems, Sun Zhang, a railway expert from Shanghai Tongji University, told the Global Times on Monday.

Founded in 2018, the company introduced a German prototype system that had a safe operation history of nearly 40 years. After five years of independent research and development, the localization rate of the system has exceeded 90 percent, news outlet thepaper.cn reported.

Unlike traditional railway systems, suspension railway systems offer greener transportation while using less land and costing less money. They also give passengers a better view of the city, said Sun.

On April 27, 2006, Shanghai unveiled a maglev train, which was also the first maglev line in China. Built with German technology, the train was put into use on a 30-km track between the downtown area and Shanghai Pudong International Airport.

Dutch semiconductor equipment maker ASML started its 2024 campus recruitment program in China on Tuesday, with key positions related to scanners, e-beams and computational lithography.

The recruitment program this year, which is about the same size as that of last year, shows that the company is staying committed to the Chinese market, despite geopolitical headwinds that are affecting the global chip supply chain, a Chinese analyst said.

The company, which had net global sales revenue of 21.2 billion euros ($22.46 billion) in 2022, said it plans to hire some 200 professionals this year, roughly the same as last year, indicating steady growth in its Chinese business.

"The continuous hiring by ASML at this critical juncture implies the company's confidence in China's vast market and its unwillingness to lose market share here," Xiang Ligang, director-general of the Beijing-based Information Consumption Alliance, told the Global Times on Sunday.

"Even if sales for certain machines are blocked in the future, the company will still need employees to maintain its existing fleet of lithography machines in China and service customers," Xiang said.

Under new Dutch export control regulations that took effect on September 1, the company is required to have licenses to continue shipments of chip tools to China.

The company said it has the required licenses for China-bound shipments of the NXT:2000i and subsequent systems until the end of 2023.

On June 30, the Dutch government announced a ministerial order restricting exports of certain advanced semiconductor equipment, a move widely believed to target China due to pressure from the US.

ASML sells about 80 Deep Ultraviolet Lithography machines to China each year, accounting for around 15 percent of the company's revenue, an analyst said.

Isolating China completely through export controls is not a viable approach, ASML CEO Peter Wennink emphasized during an interview.

China and the Netherlands have maintained communication on chip equipment export controls and China has urged the Netherlands not to abuse export control measures regarding semiconductor products, according to China's Ministry of Commerce.

In 2000, the Dutch giant that makes lithography machines established ASML China and built its first office in the country. After 23 years of development, the company now has more than 1,600 employees and 16 offices in China.

The Jakarta-Bandung High-Speed Railway (HSR), the first HSR in Indonesia and Southeast Asia, officially began operation on Monday.

The high-speed line, a landmark project under the China-proposed Belt and Road Initiative (BRI), connects Indonesia's capital Jakarta and another major city Bandung. Observers said it will have a demonstration effect for future BRI developments in Southeast Asia.

Indonesian President Joko Widodo on Monday declared the official operation of the Jakarta-Bandung HSR at Halim Station in Jakarta, the Xinhua News Agency reported.

At the ceremony, Widodo announced the name of the HSR - "Whoosh" - inspired by the sound of the train, saying that the high-speed train marks the modernization of Indonesia's transportation system, which is efficient, environmentally friendly and integrated with other public transportation networks, Xinhua reported.

The Indonesian Transportation Ministry issued an operating license Friday to PT Kereta Cepat Indonesia-China (KCIC), a consortium of Indonesian and Chinese firms responsible for developing and operating the Jakarta-Bandung HSR line.

From September 7 to 30, the high-speed railway conducted trial operations, offering free rides to local residents, according to media reports.

A spokesperson of the China Railway No.4 Engineering Group Co told the Global Times in September that ridership of the HSR could exceed 10 million trips in the first year of operation.

China Railway No.4 Engineering Group Co participated in the construction of the rail line.

Connecting Jakarta, the capital of Indonesia, and Bandung, the fourth-largest city in Indonesia, the Jakarta-Bandung HSR is 142 kilometers long and has a maximum design speed of 350 kilometers per hour. It will cut the journey between the two cities from 3.5 hours to just 40 minutes.

The HSR passes through the hinterlands of West Java province and has several stops including Halim, Karawang, Padalarang and Tegalluar.

The grand opening of the HSR received a warm welcome from the locals, who see the project as a symbol of national pride and a dream come true.

Grace Jessica, an Indonesian assistant director at the Tegalluar station of the Jakarta-Bandung HSR, told the Global Times that the "beautiful day" for a rapid ride has finally arrived. "Before the opening, many friends asked me for train tickets, and my family also longs for a chance to get on board," she noted.

As the HSR becomes a reality, Zhang Chao, executive director of the board of KCIC, told the Global Times that his feelings could be compared to "sitting the national college entrance exam," and he is excited to see eight years of hard work pay off, while having a sense of responsibility to ensure the line operates smoothly.

The Jakarta-Bandung HSR marks the first time that Chinese high-speed railway technology has been fully implemented outside of China, covering the whole system, all elements and the entire industrial chain.

Chinese Ambassador to Indonesia Lu Kang told the Global Times in a recent interview that in the long term, the HSR will further optimize the local investment environment, increase job opportunities, drive commercial and tourism development along the line, and even create new growth points to speed up the building of an HSR economic corridor.

China’s space technology was deeply applied in the country’s various industries in 2022, forming an all-weather remote sensing monitoring system for infrastructure including all sea areas and islands under its jurisdiction, the China Aerospace Science and Technology Corporation (CASC) said on Wednesday during the release of the Blue Book of China Aerospace Science and Technology Activities.

China has developed a series of satellites for ocean color, marine dynamics and surveillance, which have formed the capability of continuously and frequently covering observations of global waters, and have achieved remarkable results in applications in areas including island management, marine resource investigation and supervision, marine environmental monitoring and forecasting.

In 2022, China's marine satellites continued to carry out remote sensing inspections of key islands and reefs. In particular, they strengthened monitoring of the waters around Huangyan Island, Diaoyu Island and the Xisha, Zhongsha and Nansha Islands, providing important data support for the management and utilization of sea areas and the comprehensive management of the islands, the report noted.

In addition, China’s marine satellites are carrying out global ocean observation and forecasting, providing services for global marine dynamic environment monitoring, marine forecasting and disaster monitoring, as well as remote sensing monitoring of global sea level changes.

China's marine satellites have successfully provided important data and technical support for monitoring and warnings for fires, typhoons and storm surges at home and abroad.

Lin Mingsen, director of the National Satellite Ocean Application Service, said China will further strengthen the integration of artificial intelligence, big data and other technologies with satellite remote sensing systems, so as to provide high-quality marine satellite public service products and raise the level of marine management in China.

China's Ministry of Industry and Information Technology (MIIT) vowed to accelerate the research and development of core technologies in 6G, optical communication and quantum communication to support the country's industry digitalization.

Zhao Zhiguo, spokesperson for MIIT, said during a press conference held on Thursday that China's information communication industry saw stable growth in the first quarter of 2023 as revenue for businesses in the sector - including internet data centers, cloud computing and internet of things - increased by 24.5 percent year-on-year.

China's telecommunications revenue hit 425.2 billion yuan in the first three months of 2023, up 7.7 percent year-on-year, and the business volume also saw an 18 percent year-on-year increase, said Zhao.

By the end of March, China had built 2.64 million 5G base stations across the country, and the number of 5G mobile phone users passed 620 million as the 5G network kept expanding to rural areas as well as city management, intelligent traffic and mobile payment, MIIT data showed.

For the next step, the MIIT vowed to achieve breakthroughs in key technologies for 6G, optical communication and quantum communication, and to enhance research and development in cutting-edge areas including artificial intelligence and blockchain. It will also further secure the stability of industrial chains and supply chains.

In addition, MIIT will expand the 5G and broadband network for information consumption and residential livelihoods to support industry digitalization.

Analysis of a large number of dinosaur and bird fossils has suggested that the evolution of primitive birds was slow and the diversity of body shapes dropped, contrary to the common belief that quick and major changes occur when a new species is taking shape.

The discovery was made by Wang Min and Zhou Zhonghe from the Institute of Vertebrate Paleontology and Paleoanthropology at the Chinese Academy of Sciences. An article about the research has been published by Nature Ecology & Evolution, a sub-journal of Nature.

Vertebrate evolution from dinosaurs to birds was an epic moment in natural history and the process involved many changes in bones, muscle and skin, which are related to flying, according to a press release the institute sent to the Global Times on Monday.

One of the most notable changes was in body shape, represented by the length of the limb bones. Theropod dinosaurs, which are closer to birds in the evolutionary tree, have relatively long forelimbs. Therefore, a systematic quantitative analysis of the dynamic evolutionary trajectory of limb bones during the origin of birds is key to understanding the important transition from "dinosaurs running on land" to "dinosaurs (birds) flying in the blue sky."

Researchers established a model to analyze the limb bones of avialans (birds), non-avialan paravians (dinosaurs similar to but not the same as birds) and non-paravian theropods, finding that diversity of avialans was the lowest while for non-paravian theropods it was the highest. An estimate of limb bone evolution speed indicated the evolution "slowed down" among avialans, or primitive birds.

The analysis also found that two other indexes, which represented the flying pattern and cursorial pattern, were the lowest among birds, indicating a low evolution speed.

These findings run counter to the common assumption that diversity and evolution speed increase at an epic evolutionary juncture.

One hypothesis is that birds' forelimbs can only have limited changes within the aerodynamic frame, and many characteristics related to flying had already appeared among theropods.

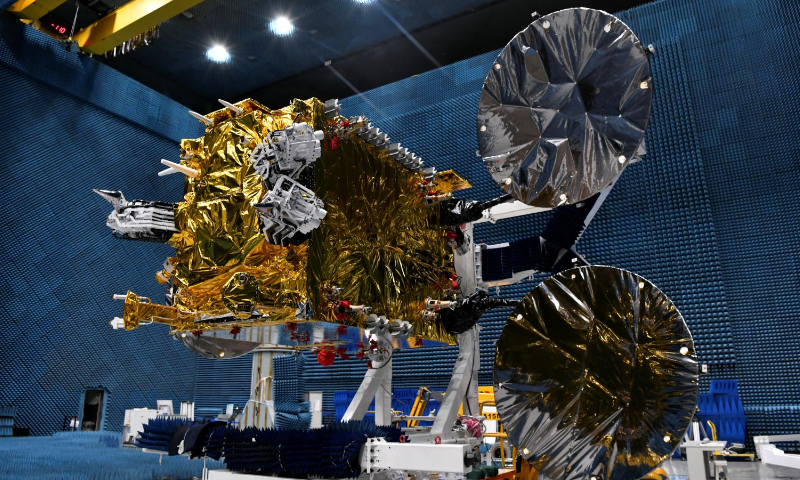

China's Hong Kong Special Administrative Region (HKSAR) has newly launched the city's first and one of the world's largest satellite manufacturing facilities, known as AMC. The facility revealed to the Global Times in an exclusive interview that their first made-in-Hong Kong, high-quality satellite would hopefully be delivered by the beginning of 2024.

Also referred to as the ASPACE Hong Kong Satellite Manufacturing Center under the HK Aerospace Technology Group, the AMC was launched on July 25, marking an important milestone in the development of the city's aerospace technology industry.

Located at the Tseung Kwan O Industrial Estate and covering a site area of 200,000 square feet (approximately 18,580 square meters), or three and a half football fields, the center hosts 18 subsystems and over 200 sets of equipment, covering comprehensive production line equipment for satellite overall structure, optical calibration, vibration, mechanical performance, electromagnetic compatibility, thermal control and precision, among others. It can provide the most comprehensive system production support for satellites and various related aerospace products before they leave the factory, AMC said in response to the Global Times' inquiries via email.

According to AMC, at the early stage following its launch, its main products include remote sensing satellite constellations (both optical and radar) and key payloads such as synthetic aperture radar (SAR) and optical cameras, used to obtain more detailed and accurate Earth observation data.

AMC would also provide customized technologies, including developing customized products according to specific use requirements, such as carbon monitoring satellites, meteorological satellites, etc., for monitoring and forecasting in specific fields.

AMC will also work to manufacture communication satellite constellations to provide global communication services and meet the communication needs of governments and commercial companies; navigation enhancement satellites to provide more accurate and reliable navigation positioning services, meeting the navigation needs of transportation departments and individual users; and multi-functional integrated satellites that combine communication, navigation and remote sensing functions to provide various application services.

The Hong Kong-based satellite manufacturing center said its main customers include government departments such as the China Meteorological Administration, environmental protection departments and transportation departments; commercial companies in fields covering land asset management, carbon trading and ESG service products; research institutions including universities, key astronomical research laboratories and remote sensing application laboratories; and individual users.

"The AMC satellites procurement and satellite applications require close cooperation with the mainland, especially in areas such as production line research and development, satellite product upgrades, satellite launch and orbit control, and supplier solutions," the group explained in the email it provided to the Global Times.

It is worth noting that China successfully launched the Golden Bauhinia-3, -4 and -6 satellites via the Long March-2D carrier rocket from Taiyuan Satellite Launch Center on January 15, 2023. Those satellites were developed by the HK Aerospace Technology Group.

The group has successfully launched 12 satellites for its Golden Bauhinia Constellation project so far and plans to manufacture and launch the remaining satellites under the Golden Bauhinia Constellation project during the period from the end of 2023 to 2026.

The constellation is an active-passive hybrid low-orbit high-frequency satellite constellation that combines optical remote sensing and synthetic aperture radar to form an all-weather and near-real-time dynamic monitoring system.

AMC highlighted that after the comprehensive deployment of its Golden Bauhinia satellite constellation, it will consider user groups in the Greater Bay Area as an early priority and provide long-term satellite data application services to support the area's smart city construction, environmental governance, climate monitoring, and other key areas.

Chinese space observers said on Monday that, as the country's space strengths have advanced to the first-class tier worldwide, science and research institutions such as universities in the Hong Kong SAR could fully play their part by keeping up with the country's momentum and fully displaying their basic research and innovation capabilities, especially in the aerospace domain.

It is important for Hong Kong to play its due part as the innovation center and forerunner in the Greater Bay Area, which is in line with the national positioning of the city, Song Zhongping, a Hong Kong-based space watcher and TV commentator, told the Global Times on Monday.

In return, Hong Kong could bridge and improve international cooperation in space with China as the city does in other fields, he added.

Also, as an international hub, Hong Kong could launch its satellites not only from the Chinese mainland, but also from overseas. The robust aerospace development could bring forth new economic growth and inject impetus into the city, Song noted.

The target market for ASPACE Hong Kong Satellite Manufacturing Center is expected to grow to $30 billion by 2027, according to the Hong Kong-based South China Morning Post.

Sun Dong, Secretary for Innovation, Technology and Industry of the HKSAR government, said that the center will be the most advanced satellite manufacturing center in Asia in the next three to five years.

In fact, both the HKSAR and China's Macao Special Administrative Region have become increasingly involved in the country's major space program.

According to the China Manned Space Agency (CMSA) on May 29, the selection of the fourth group of taikonauts, China's new generation of astronauts, is proceeding as planned and will be completed by the end of this year. More than 100 candidates have entered the second round, including more than 10 from Hong Kong and Macao.

The selection process was launched in 2022 and will result in 12 to 14 reserve taikonauts being picked, each with different specialisms, such as spacecraft pilots, flight engineers and payload specialists, per the CMSA's previous comments.

South China’s Shenzhen vowed on Wednesday to intensify its crackdown on ill-intentioned speculation and smears against private businesses as part of its new 20-point measures to boost the private economy, according to Shenzhen Fabu, the official WeChat account of the Shenzhen Government Information Office.

The move marks a prompt response from local authorities to implement the comprehensive guidelines recently issued by the central government to support the private sector.

According to the measures, Shenzhen will step up efforts to combat deliberate speculation, rumors, and defamation against private enterprises and entrepreneurs. The city will also crack down on "online blackmouths" in accordance with the law to create a favorable social atmosphere that respects and supports the growth of private entrepreneurs.

The city will also actively promote leading private enterprises in emerging fields such as new energy vehicles, artificial intelligence, and new energy storage. Moreover, it will foster national and provincial-level characteristic industrial clusters for small and medium-sized enterprises.

To strengthen financing support for private enterprises, Shenzhen will establish a 5 billion yuan ($685 million) fund to hedge risks in loans to small and micro enterprises and reduce the guarantee fee rate for financing these enterprises by government financing institutions to below 1 percent.

Efforts will also be made to support private companies in expanding into overseas markets and participating in overseas projects arising from the Belt and Road Initiative and the Regional Comprehensive Economic Partnership.

Shenzhen is home to a long list of renowned private firms such as Huawei, Tencent, and BYD. Its private sector has been one of the most dynamic in major Chinese cities, playing an outsized role in the city’s economy, according to Shenzhen Daily.

By the end of 2022, there were 2.379 million private companies in Shenzhen, accounting for 97 percent of the city’s overall firms. The private economy comprised 55.9 percent of the city’s GDP, according to the report.

The island of Taiwan's "mainland affairs council" recently released a "plan" to "resume" cross-Straits tourism and exchanges, but such a plan is a "pie in the sky" as it actually imposes stricter restrictions for exchanges, Zhu Fenglian, spokesperson for the Taiwan Affairs Office of the State Council, said on Friday.

Taiwan's "mainland affairs council" announced on Thursday that it will "loosen restrictions" on business travelers from the Chinese mainland. Tour groups from the mainland are allowed to visit the island but with a maximum of 2,000 people per day. The resumption of group tours will begin in a month but no specific date was given, according to media on the island.

Zhu described the "plan" to "resume" cross-Straits exchanges as "a pie in the sky" created by the Democratic Progressive Party (DPP) authorities, as it claims to "resume" but actually refuses to lift the ban, and claims to "relax" but actually imposes stricter restrictions on cross-Straits exchanges.

The spokesperson noted that the DPP's so-called plan has set three ignominious records in the history of cross-Straits exchanges, as it imposes unprecedented restrictions on people from the island of Taiwan traveling to the mainland in group tours, unprecedented regulation of Taiwan's tourism industry, and unprecedented restrictions on companies on the island inviting mainland personnel to Taiwan for exhibitions and business exchanges.

Zhu said that the Chinese mainland has always opened its doors and warmly welcomed compatriots from the island of Taiwan to travel to the mainland without any limits on the number of people. It is absurd that the DPP now wants to control the number of cross-Straits tourists based on the so-called "principle of reciprocity" and require Taiwan's tourism associations to establish a mechanism to regulate the number of tourists to the mainland.

"People can't help but ask, since the lifting of martial law in Taiwan in 1987, people on the island have never encountered any obstacles when traveling to the mainland. Why are the DPP authorities so afraid of people coming to the mainland? Do they want to bring Taiwan back to the state of martial law?" said Zhu, noting that the DPP authorities are trying in vain to use the so-called "principle of reciprocity" as an excuse to shift blame and responsibility.

The DPP's "plan" precisely regulates the exhibition booth area for companies on the island of Taiwan, and restricts the length of stay for mainland personnel on the island to one day. It also sets different quotas for invitation restrictions. The strictness and meticulousness of the restrictions on companies on the island and on mainland personnel going to the island of Taiwan are astonishing, said Zhu.

The so-called plan is clearly a barrier disguised as "relaxation," said Zhu, noting that the DPP authorities have spared no effort to come up with absurd and unpopular measures to obstruct and restrict normal cross-Straits exchanges.

The desire for communication, cooperation, peace, and development is the common aspiration of the people on the island of Taiwan. The DPP authorities are trying to deceive and cover up their true intentions of obstructing exchanges and interactions between the island and the mainland with their so-called "plan," said Zhu.

We believe that compatriots on the island can see through their intentions and will not be deceived. We hope that compatriots on both sides can work together to promote the return of cross-Straits relations to the correct track of peaceful development and truly achieve the normalization and regularity of cross-Straits tourism and two-way exchanges, said Zhu.